Voice-First Is No Longer Optional. It’s Structural.

Voice-first design is transforming how institutions connect with customers. No longer a novelty or add-on, conversational AI is becoming core infrastructure for modern engagement. Organizations that design for natural dialogue and contextual understanding are redefining expectations, while those relying on static interfaces risk fading into the background.

When did speaking to an institution start feeling less like navigating a system and more like being understood?

Picture a prospective student standing outside after school, juggling deadlines, expectations, and uncertainty about the future. Instead of digging through a maze of web pages or waiting on hold, the student simply asks a natural question aloud:

What are the admission requirements? Is financial aid available? When is the deadline?

The AI voice agent on the other end doesn’t just recite generic information. It understands the context (the intended major, location, and academic background) and responds clearly.

It not only outlines next steps but also explains scholarship options and even schedules a follow-up conversation with an admissions counselor. In minutes, confusion turns into clarity.

It doesn’t feel like interacting with a system. It feels like being guided.

That’s the quiet revolution unfolding right now. Voice-first design and conversational AI are no longer experimental add-ons to existing platforms. They are fundamentally rearchitecting how institutions and the people they serve relate to one another. The interface fades into the background. The conversation becomes the experience.

For organizations building or designing customer journeys in 2026, this shift isn’t optional. It’s structural.

Institutions that design for natural dialogue and contextual understanding will redefine expectations. Those that rely on static forms and fragmented touchpoints risk becoming invisible in a world that increasingly communicates by simply speaking.

The most powerful transformations rarely announce themselves. Sometimes, they begin with a single question about college admission, and a system that knows exactly how to respond.

But to understand why this shift matters, we need to rethink what “voice-first” actually means.

Voice-First Is a Philosophy, Not a Feature

Before anything else, let me clear up a common misconception. Voice-first doesn't mean voice-only. It doesn't mean ripping out your chat interface and replacing it with a microphone icon. What it actually means is designing experiences where voice is a primary, natural interaction mode, supported and enriched by text and visuals.

It’s like being a bilingual professional. The best communicators don't force every conversation into one language; they switch fluidly based on context. Voice-first design works the same way: it leads with what's most natural for the human, and the interface adapts.

The design philosophy here goes deeper than microphone placement. Voice-first UX requires:

- Rethinking feedback loops - ensuring the system communicates clearly and guides users naturally.

- Error recovery - gracefully handling misunderstandings without breaking the conversation.

- Latency tolerance - designing for natural pauses and pacing that feel human.

- Conversational flow from the ground up - structuring interactions sequentially, so every response builds logically.

Unlike a screen where a user can backtrack and explore non-linearly, a voice interaction is inherently sequential and ephemeral. If your system stumbles, there's no scrollback to save the moment. The experience lives and dies in real time.

In short, voice-first is a design philosophy, not a feature toggle. It requires rethinking your entire interaction model, not just adding a “talk to us” button.

The Four Forces Powering the Voice AI Breakthrough

If voice interfaces have existed since the early days of Siri and Alexa, why is this moment different?

Because four things converged at once.

1. Technology maturity

Large Language Models got good enough. The jump from rule-based voice systems to LLM-powered conversational agents is the difference between a phonebook and a conversation. Modern voice AI can handle ambiguity and context switching in ways that would have seemed like science fiction five years ago.

2. Consumer behavior shift

Consumer comfort crossed a threshold. What once felt experimental is now normalized, as ecosystems built by companies like Amazon, Google, and Apple have trained consumers to speak naturally to devices. Users are increasingly comfortable transacting, resolving issues, and making decisions through voice interfaces, particularly when trust signals are strong and the stakes of a mistake feel low.

3. Business strategy evolution

Competitive advantage is shifting toward hybrid voice models. Businesses that combine AI-driven voice automation with seamless human escalation are seeing gains in efficiency and accessibility. Voice systems now handle repetitive, high-volume inquiries around the clock, while human agents focus on complex or emotionally sensitive interactions. The result isn’t replacement but augmentation: automation improves speed and scale, and people preserve trust and nuance.

4. Environmental readiness

Hardware proliferation reached saturation. Smart speakers, voice-enabled phones, connected cars, wearables, and environments where voice is the only practical input have expanded dramatically. Customers are already living in voice-first contexts. In fact, they’re waiting for your product to catch up.

In a nutshell, this isn't a trend driven by novelty. It's a structural shift driven by capability and behavior converging simultaneously.

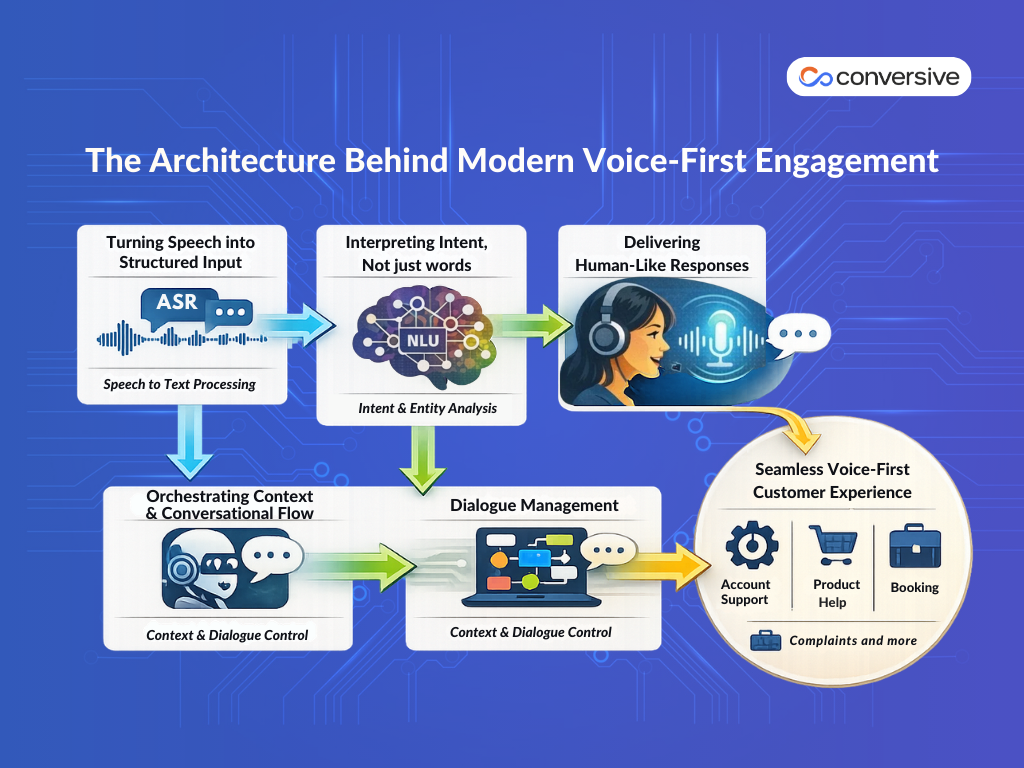

The Architecture Behind Modern Voice-First Engagement

A well-built voice-first customer engagement system typically has several interlocking components working in tandem:

1) Turning Speech into Structured Input

Automatic Speech Recognition (ASR) converts spoken input to text with high accuracy across accents, background noise, and domain-specific vocabulary. The technical baseline here has improved enormously, but domain tuning still matters significantly for enterprise contexts.

2) Interpreting Intent, Not Just Words

Natural Language Understanding (NLU) extracts intent and entities from what was said. This is where most early voicebot implementations fell apart: they recognized the words but didn't understand the meaning. Modern NLU layers powered by transformer-based models are substantially more robust.

3) Orchestrating Context and Conversation Flow

Dialogue Management tracks conversation state, handles multi-turn interactions, manages context across topics, and decides what to do next. This is the orchestration layer that separates a capable voice agent from an annoying one.

4) Delivering Human-Like Responses

Natural Language Generation (NLG) and Text-to-Speech (TTS) determine what gets said back and how it sounds. Voice tone, pacing, and prosody (the rhythm and melody of speech) significantly affect whether an interaction feels natural or robotic.
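To make the hand-offs between these four components concrete, here is a minimal Python sketch of a single conversational turn. Everything in it is an illustrative assumption rather than a real product API: the function names, the keyword-based intent matching, and the canned replies are stand-ins for what would be trained ASR, NLU, and TTS services in production.

```python
from dataclasses import dataclass, field


@dataclass
class DialogueState:
    """Conversation state the dialogue manager carries across turns."""
    history: list = field(default_factory=list)


def recognize_speech(audio: str) -> str:
    # Stand-in for ASR: the "audio" is already text, so we only normalize it.
    return audio.strip().lower()


def understand(text: str) -> dict:
    # Stand-in for NLU: keyword matching instead of a trained model.
    if "deadline" in text:
        return {"intent": "ask_deadline"}
    if "financial aid" in text or "scholarship" in text:
        return {"intent": "ask_financial_aid"}
    return {"intent": "unknown"}


def decide(state: DialogueState, nlu: dict) -> str:
    # Dialogue management: record the turn, then choose the next action.
    state.history.append(nlu["intent"])
    if nlu["intent"] == "unknown":
        return "clarify"
    return "answer_" + nlu["intent"]


# Hypothetical canned replies; a real NLG layer would generate these.
REPLIES = {
    "answer_ask_deadline": "I can look up the deadline for your program. "
                           "Which program are you applying to?",
    "answer_ask_financial_aid": "I can walk you through financial aid options. "
                                "Would you like to start with scholarships?",
    "clarify": "Sorry, could you rephrase that?",
}


def generate(action: str) -> str:
    # Stand-in for NLG; a TTS engine would then speak the returned text.
    return REPLIES[action]


def handle_turn(state: DialogueState, audio: str) -> str:
    text = recognize_speech(audio)   # 1) speech to text
    nlu = understand(text)           # 2) text to intent
    action = decide(state, nlu)      # 3) intent to next action
    return generate(action)          # 4) action to spoken reply
```

Calling `handle_turn(DialogueState(), "When is the deadline?")` walks one utterance through all four stages. The value of keeping the stages separate is that each can be tuned or swapped independently.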

When these components are well-integrated and trained on domain-relevant data, the result is an agent that can handle complex customer journeys end-to-end:

- account queries

- product troubleshooting

- booking

- complaint escalation, and more.

GlobeNewswire reports that 75% of customers prefer businesses that offer support in their native language. As companies expand globally, multilingual service shifts from optional to essential for maintaining trust and competitive relevance.

The takeaway: building a voice AI agent isn't a matter of bolting a voice layer onto a chatbot. It's an entirely different engineering and design challenge that requires thinking in terms of acoustic UX, not screen UX.

Voice AI Is Transforming High-Volume, Customer-Facing Industries

Voice-first adoption is playing out in measurable ways across industries where volume and customer expectations collide. The most forward-leaning sectors are deploying voice AI not as an experiment, but as core infrastructure.

Financial Services

Banks and insurance companies are deploying voice AI for account management, fraud alerts, and claims intake. The reduction in average handle time is significant, but the more interesting metric is first-contact resolution (the percentage of issues completely resolved in a single interaction). Voice AI, when well-designed, consistently improves this because it eliminates the asynchronous gaps of email or chat and reduces handoff friction.
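Because first-contact resolution is defined as a simple ratio, it is easy to compute from interaction logs. The sketch below assumes a hypothetical input: a list holding, for each resolved issue, the number of interactions it took. Real contact-center data will need its own rules for what counts as "the same issue".

```python
def first_contact_resolution(interactions_per_issue: list[int]) -> float:
    """Percentage of issues that were resolved in exactly one interaction."""
    if not interactions_per_issue:
        raise ValueError("need at least one resolved issue")
    resolved_first = sum(1 for n in interactions_per_issue if n == 1)
    return 100.0 * resolved_first / len(interactions_per_issue)


# Four issues: two resolved on first contact, two needed follow-ups.
print(first_contact_resolution([1, 1, 2, 3]))
```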

Healthcare

Voice agents are helping patients schedule appointments, navigate post-discharge instructions, and manage medication reminders. In a context where human agent availability is chronically constrained, this matters enormously. It's also worth noting that many patients find voice interaction more comfortable for sensitive topics than typing; there's less cognitive friction to just saying something out loud.

Education

Voice AI is revolutionizing education by streamlining administrative tasks, supporting students, and enabling personalized learning. Schools and universities can use it to handle inquiries, scheduling, and discipline tracking, driving measurable efficiency. Case studies highlight lower no-show rates, reduced paperwork, and cost savings.

Real Estate

Real estate agencies and property management firms are adopting voice AI for high-volume tasks like scheduling viewings, answering property inquiries, qualifying leads, multilingual engagement, and property management. Agencies using these tools report a 90% reduction in response time and up to a 45% increase in lead conversion. Some firms see an 80% reduction in operational costs compared to human-staffed call centers.

Customer Service

Customer service teams are under more pressure than ever. Expectations are rising, ticket volumes are at record highs, and one bad experience can cost you a customer, yet traditional support models are expensive and hard to scale. That’s why leaders are investing in AI. The key is focusing on the metrics that matter, and using Voice AI to improve them efficiently.

In a nutshell, the strongest use cases for voice AI are the high-volume, repetitive, time-sensitive interactions that frustrate customers and burn out human agents.

Voice Interface Design Principles

Creating a great voice experience isn’t just about having the latest speech recognition engine; it’s about thoughtful design. These principles focus on how users actually interact with voice, so your assistant feels intuitive and helpful.

1) Lead with confirmation, not assumption

Voice input is inherently ambiguous. Customers bring regional accents, background noise, unclear speech, and imprecise phrasing. A good voice assistant doesn't just guess what you're saying and act on it right away. Instead, it checks that it has understood you correctly before doing something important, like making a payment or booking a reservation.

User: Book a flight for next Friday to New York.

Voice Agent: Did you mean next Friday, April 28th, for a flight to New York?
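One way to express this principle in code is a small policy that asks for confirmation whenever the action is high-stakes or the recognizer is unsure. This is only a sketch: the intent names, the 0.85 threshold, and the `plan_response` function are hypothetical choices, and a real system would tune them against its own data.

```python
from typing import Optional

# Hypothetical intent names for actions that are costly to get wrong.
HIGH_STAKES_INTENTS = {"make_payment", "book_flight", "book_reservation"}
# Hypothetical cut-off below which the agent should double-check.
CONFIDENCE_THRESHOLD = 0.85


def plan_response(intent: str, confidence: float,
                  summary: str) -> tuple[str, Optional[str]]:
    """Return ("confirm", prompt) or ("execute", None) for one request."""
    if intent in HIGH_STAKES_INTENTS or confidence < CONFIDENCE_THRESHOLD:
        # Read the understood request back before acting on it.
        return ("confirm", f"Did you mean {summary}?")
    return ("execute", None)


print(plan_response("book_flight", 0.97, "a flight to New York next Friday"))
```

Note that a booking is confirmed even at high confidence, while a low-stakes request only triggers a check when recognition is shaky.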

2) Design for graceful failure, not just happy paths

The happy path is where a user says exactly what the system expects, clearly, on the first try. In reality, that's probably 40% of interactions on a good day. The other 60% involve misheard words, topic pivots, corrections, and confusion. Because of that, you need to design not only for the ideal path but also for how the voice agent handles failures.

User: Book a flight to Chicago… oh wait, do you know the weather there?

Voice Agent: I can help with flights or weather. Which would you like to do first?
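A recovery policy like this can be sketched in a few lines. The version below assumes hypothetical inputs (a count of failed attempts and a list of topics the agent thinks it heard) and a hypothetical limit of two reprompts before handing off to a person.

```python
MAX_REPROMPTS = 2  # hypothetical limit before escalating to a human


def recover(failed_attempts: int, candidate_topics: list[str]) -> str:
    """Pick a recovery reply instead of guessing or dead-ending."""
    if failed_attempts >= MAX_REPROMPTS:
        # Repeated failure: stop looping and hand off.
        return "Let me connect you with a person who can help."
    if len(candidate_topics) >= 2:
        # The user pivoted mid-sentence: offer the two topics heard.
        options = " or ".join(candidate_topics[:2])
        return f"I can help with {options}. Which would you like to do first?"
    # Nothing usable was understood: ask again, briefly.
    return "Sorry, I didn't catch that. Could you say it another way?"


print(recover(0, ["flights", "weather"]))
```

The escalation check comes first on purpose, so a confused user is never trapped in an endless clarification loop.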

3) Respect cognitive load

Human working memory is limited. If a voice agent delivers a long list of choices and warnings all at once, the user will likely stop paying attention after the second item. The best voice experiences are conversational in the truest sense: they're dialogues, not monologues. Keep responses short. Let the user drive.

User: Can you help me find a restaurant nearby?

Voice Agent: Sure, do you want Italian, Chinese, or Mexican?
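As a rough illustration, the helper below caps how many options are spoken in a single turn and offers the rest on request. The limit of three and the function name `offer_options` are assumptions made for this sketch, not an established standard.

```python
def offer_options(options: list[str], max_spoken: int = 3) -> str:
    """Speak at most `max_spoken` options and offer the rest on request."""
    if not options:
        raise ValueError("need at least one option")
    spoken = options[:max_spoken]
    if len(spoken) == 1:
        listing = spoken[0]
    elif len(spoken) == 2:
        listing = f"{spoken[0]} or {spoken[1]}"
    else:
        listing = ", ".join(spoken[:-1]) + f", or {spoken[-1]}"
    prompt = f"Do you want {listing}?"
    if len(options) > max_spoken:
        # Remaining choices stay available without being read aloud.
        prompt += " Say 'more' to hear other options."
    return prompt


print(offer_options(["Italian", "Chinese", "Mexican", "Thai", "Indian"]))
```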

4) Build in natural-sounding prosody

Flat, robotic TTS is one of the fastest ways to break trust in a voice interaction. Modern synthesis engines offer substantial control over pace, pitch, and emphasis. Use it. An agent that sounds natural is an agent that feels trustworthy.

User: My flight got canceled.

Voice Agent: I’m really sorry that happened!

Voice Agent: Let’s see what alternatives are available for you.

Voice Agent: I’ll do my best to get you on the next flight.

5) Provide context and continuity

A good voice assistant remembers the conversation and avoids making the user repeat themselves unnecessarily. It keeps track of context across turns to make interactions smoother.

User: Book a table for two at an Italian restaurant tonight.

Voice Agent: Sure, do you want the same 7:30 PM slot as last time?
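Under the hood, continuity usually comes down to keeping a record of what the user has already said and asking only for what is missing. The sketch below assumes a hypothetical `ConversationContext` class with free-form slot names. It shows context within one conversation only; remembering a "last time" preference would additionally need stored user history.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationContext:
    """Slots (details) collected so far in this conversation."""
    slots: dict = field(default_factory=dict)

    def update(self, new_slots: dict) -> None:
        # Overwrite only what the user just said; keep earlier details.
        self.slots.update(
            {name: value for name, value in new_slots.items() if value is not None}
        )

    def missing(self, required: list[str]) -> list[str]:
        # Only these details still need to be asked for.
        return [name for name in required if name not in self.slots]


REQUIRED = ["party_size", "cuisine", "date", "time"]  # hypothetical slot names

ctx = ConversationContext()
ctx.update({"party_size": 2, "cuisine": "Italian", "date": "tonight"})
print(ctx.missing(REQUIRED))   # the agent only needs to ask about the time
ctx.update({"time": "7:30 PM", "cuisine": None})
print(ctx.missing(REQUIRED))   # nothing left to ask
```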

6) Allow user control and exit paths

Users should feel in control at all times. Always offer clear ways to cancel, go back, or clarify instructions. This prevents frustration when the assistant misunderstands.

User: Play some music.

Voice Agent: Playing your top hits. You can say ‘stop’ or ‘skip’ anytime.
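A common way to guarantee exit paths is to check every utterance for a small set of global commands before any other processing. The command list and the `route` function below are hypothetical, and the matching is deliberately simple.

```python
# Hypothetical global commands that must work at any point in a conversation.
GLOBAL_COMMANDS = {
    "stop": "stop",
    "cancel": "cancel",
    "go back": "go_back",
    "skip": "skip",
}


def route(utterance: str) -> str:
    """Return a global action if one was spoken, else defer to intent handling."""
    text = utterance.strip().lower().rstrip(".!?")
    for phrase, action in GLOBAL_COMMANDS.items():
        # Match the bare command or the command followed by more words.
        if text == phrase or text.startswith(phrase + " "):
            return action
    return "handle_intent"


print(route("Go back, please"))
print(route("Play some music"))
```

Checking these commands first means a misunderstanding elsewhere in the flow can never block the user from leaving it.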

7) Design for accessibility

Consider users with speech impairments, hearing issues, or non-native accents. Making your voice experience inclusive broadens usability and trust.

The most common voice UX mistakes aren't technical; they come from failing to design for how humans actually communicate rather than how we imagine they should.

Ethical Considerations for Voice-First AI Adoption

With technology reaching unprecedented levels of efficiency, companies must rethink not only how they serve customers, but also how responsibly they do so. AI voice agents now achieve 98% first-call resolution rates compared with a 71% industry average, fundamentally changing customer service economics.

As voice AI becomes infrastructure, ethics becomes architecture. Here are the four ethical fault lines every organization must address:

I) The consent problem

When a customer interacts with a voice agent, do they know they're talking to AI? Disclosure isn't just ethically right; it's increasingly legally required in multiple jurisdictions. Failing to disclose isn't a strategy; it's a liability.

II) The bias problem

ASR systems have historically performed worse on certain accents and speech patterns, which means they've systematically failed certain demographic groups. A voice-first strategy that doesn't account for this will reproduce and scale existing inequalities. It's important to test and make sure the AI works fairly for different types of people.
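One practical way to run such a test is to compare word error rate (WER) across speaker groups on the same set of prompts. The sketch below computes WER with a standard word-level edit distance. The group labels and sample format are hypothetical, and averaging per-utterance WER is a simplification (pooling errors over all words is another common choice).

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    prev = list(range(len(hyp) + 1))  # distances for an empty reference
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


def wer_by_group(samples: list[tuple[str, str, str]]) -> dict[str, float]:
    """Average WER per group from (group, reference, hypothesis) samples."""
    scores: dict[str, list[float]] = {}
    for group, reference, hypothesis in samples:
        scores.setdefault(group, []).append(word_error_rate(reference, hypothesis))
    return {group: sum(vals) / len(vals) for group, vals in scores.items()}


# Hypothetical audit data: same prompt, two speaker groups.
print(wer_by_group([
    ("group_a", "pay my bill", "pay my bill"),
    ("group_b", "pay my bill", "play my bill"),
]))
```

A persistent gap between groups on matched prompts is a signal to collect more representative training or tuning data before scaling the deployment.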

III) The dependency problem

When companies use AI to handle most customer conversations, what happens to the skills and routines that people used to practice? It's better to create systems where AI helps humans, rather than replacing them entirely. This way, humans stay involved in tricky or emotional cases, which leads to better results and keeps important knowledge that AI alone can't capture.

IV) The data problem

Voice interactions generate extraordinarily rich data, not just what was said but also how it was said, including emotional state and hesitation. How that data is stored, used, and protected needs explicit governance. Privacy-by-design isn't a compliance exercise; it's a trust investment.
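As a small example of data minimization, transcripts can be redacted before they are stored. The sketch below uses two illustrative regular expressions (email addresses and runs of eight or more digits). It is an assumption-laden starting point, not a complete PII solution, and it covers only the text, not acoustic signals such as tone or hesitation.

```python
import re

# Illustrative patterns only; real deployments need broader PII coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
# Eight or more digits, optionally separated by spaces or hyphens
# (for example, account or card numbers).
LONG_NUMBER = re.compile(r"\b\d(?:[ -]?\d){7,}\b")


def minimize(transcript: str) -> str:
    """Redact obvious identifiers before a transcript is stored."""
    redacted = EMAIL.sub("[EMAIL]", transcript)
    return LONG_NUMBER.sub("[NUMBER]", redacted)


print(minimize("My card is 4111 1111 1111 1111 and my email is a.b@example.com"))
```

Short numbers such as a party size or a time like 7:30 are left untouched, so the stored transcript stays useful for improving conversation design.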

Moving Forward

The future of customer service is AI-driven, but responsible adoption is non-negotiable. Practical steps include:

- Embedding clear disclosure at the start of every interaction

- Conducting regular bias audits with diverse evaluators

- Designing seamless escalation paths to human agents

- Implementing data minimization and privacy-by-design principles

By combining AI efficiency with human judgment, businesses can deliver superior customer experiences while upholding trust and expertise.

Design Better Customer Conversations with Conversive Voice AI

The technology is ready, and the shift in customer behavior is undeniable. Start small, iterate quickly, and scale strategically. Test a voice-first AI agent with a segment of your audience, measure what works, and refine the conversation flow for maximum impact.

Most importantly, design for humans, not just technology. Voice AI is a tool, an incredibly powerful one, but the ultimate goal is meaningful and trustworthy interactions that delight your customers.

If you’re exploring how voice-first design can transform your customer journeys, we help organizations move from experimentation to real-world deployment, connecting strategy, platform integration, measurable outcomes, and continuous optimization through voice and AI. Book a demo today!

Frequently Asked Questions (FAQs)

1. What is voice-first design, and how is it changing customer experiences?

Voice-first design prioritizes natural spoken interactions as the primary interface, supported by text and visuals. It reduces friction and helps customers get answers in real time without navigating complex systems.

2. Which industries benefit most from voice-first AI solutions?

High-volume, customer-facing sectors like financial services, healthcare, education, and real estate are seeing the biggest gains. These industries handle frequent inquiries where speed and personalization directly impact customer loyalty and revenue.

3. How does voice AI handle accents and regional language variations?

Modern voice AI systems use advanced speech recognition tuned for regional accents and multilingual support. This ensures inclusivity and consistent service quality for users from different geographic regions or linguistic backgrounds.

4. Are voice-first interfaces secure and privacy-compliant?

Yes, responsible voice-first systems implement privacy-by-design, data minimization, and explicit consent disclosure. They also follow regulatory guidelines to ensure compliance and build customer trust across all interactions.

5. Can small businesses benefit from voice-first customer engagement?

Absolutely! Local businesses use voice AI for bookings, inquiries, and support, boosting accessibility and efficiency. Even small teams can scale customer support and reduce wait times, improving overall satisfaction and repeat business.

6. What measurable benefits can Conversive Voice AI provide for improving customer experiences?

Conversive Voice AI helps reduce response times, improve first-call resolution, and increase customer satisfaction. It also frees human agents to focus on complex or sensitive interactions while AI handles repetitive inquiries.

7. How quickly can businesses implement Conversive Voice AI into their customer support workflow?

Conversive Voice AI can be tested with a segment of your audience to start small and iterate fast. It integrates with existing systems, allowing seamless deployment without major workflow disruptions. Businesses can scale the solution gradually, optimizing conversations based on real-time data and insights.
